LLMs Can Unmask Pseudonymous Users at Scale with Surprising Accuracy

- LLMs can achieve up to 68% recall and 90% precision in deanonymizing users.

- Cross-platform analysis of social media data enhances the effectiveness of LLMs.

- Mitigation strategies include enforcing rate limits and monitoring for misuse of AI models.

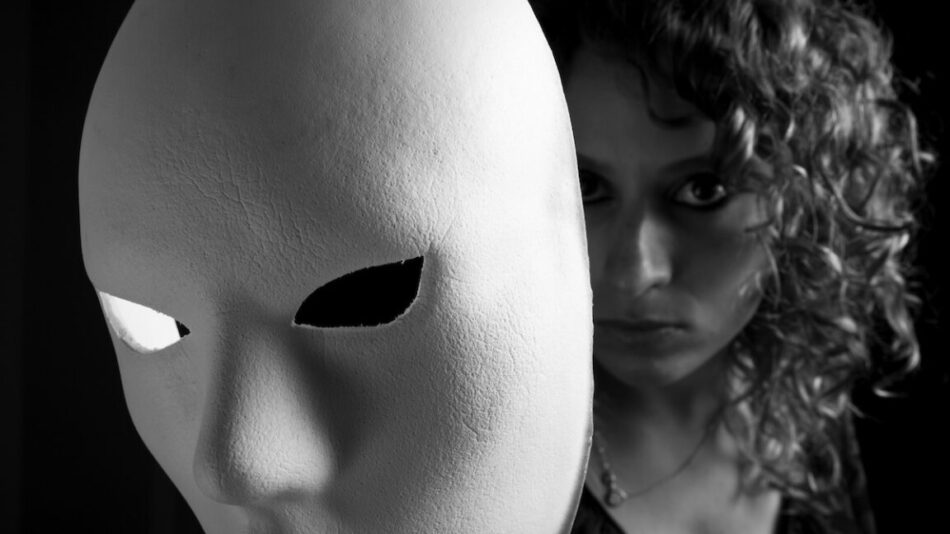

Large language models (LLMs) have transformed many sectors, and their implications for online privacy are profound. Recent research indicates that LLMs can unmask pseudonymous users, raising serious concerns about privacy and security online.

This article reviews the findings of that research: the methods the researchers used, what the results mean for pseudonymity, and the strategies proposed for mitigating the risks.


Understanding Pseudonymity in the Digital Age

Pseudonymity has long been considered a shield for privacy on the internet. Users often believe that operating under a pseudonym protects them from identification and unwanted scrutiny. However, the advent of LLMs has challenged this notion, revealing that pseudonymity may not be as robust as previously thought.

Research indicates that LLMs can analyze data across multiple social media platforms to link pseudonymous accounts with real identities. This capability poses significant risks for individuals who rely on pseudonyms for privacy, especially in sensitive discussions.

Research Findings on LLMs and Deanonymization

A recent study highlighted the remarkable capabilities of LLMs in deanonymizing users. The researchers conducted experiments that correlated specific individuals with their accounts or posts across various social media platforms, achieving a recall rate of up to 68% and a precision rate as high as 90%. These results far exceed those of traditional deanonymization techniques.
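To make the two metrics concrete: precision is the share of attempted identifications that are correct, while recall is the share of all target users who are correctly identified. The sketch below is only an illustration of those definitions. The function name and the counts in the example are hypothetical and are not taken from the study.

```python
from typing import Tuple


def precision_recall(correct: int, guessed: int, total: int) -> Tuple[float, float]:
    """Return (precision, recall) for a deanonymization attempt.

    correct: identifications that turned out to be right
    guessed: users for whom the system offered any identification
    total:   all users the system was asked to identify
    """
    precision = correct / guessed if guessed else 0.0
    recall = correct / total if total else 0.0
    return precision, recall


# Hypothetical counts: 1,000 target users, 500 guesses made, 450 of them right.
precision, recall = precision_recall(correct=450, guessed=500, total=1000)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

A system that abstains when unsure can keep precision high at the cost of recall, which is why the two figures are reported together.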

Methodologies Employed

The researchers utilized several datasets from public social media sites, employing a pseudonymous stripping framework to test their techniques. Key methodologies included:

- Cross-Platform References: By linking posts from platforms like Hacker News and LinkedIn, the researchers could identify users more effectively.

- Micro-Data: Drawing on the micro-data Netflix released for the Netflix Prize, the team demonstrated how preferences and transaction records could reveal personal information.

- Reddit History Analysis: By analyzing a user’s Reddit comments, the researchers could deanonymize individuals based on their interactions and shared interests.

Case Studies and Experiments

In one experiment, the researchers analyzed responses from a questionnaire about AI usage. They successfully identified 7% of participants based solely on general information provided, showcasing the potential of LLMs to extract identity signals from seemingly innocuous data.

Another experiment focused on Reddit users, where the researchers found that the more movies a user discussed, the easier it was to identify them. Even among users who shared comments about a single movie, identifications reached a 90% precision rate.

Furthermore, the study compared LLM-based deanonymization techniques against traditional methods, such as the Netflix Prize attack. The results indicated that LLMs significantly outperformed classical approaches, achieving better recall and precision even with less structured data.
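The intuition behind the movie result can be shown with a toy "anonymity set" calculation: each extra movie a person is known to have discussed shrinks the pool of accounts that match. This is a generic illustration on made-up data, not the LLM-based method used in the study. The account names, movie titles, and function names are all hypothetical.

```python
from typing import Dict, List, Set

# Entirely synthetic data: hypothetical pseudonyms and the movies they mention.
MENTIONS: Dict[str, Set[str]] = {
    "user_a": {"Alien", "Heat", "Ran"},
    "user_b": {"Alien", "Heat"},
    "user_c": {"Alien", "Ran"},
    "user_d": {"Alien"},
    "user_e": {"Heat"},
}


def candidates(observed: Set[str], mentions: Dict[str, Set[str]]) -> List[str]:
    """Accounts whose mentioned movies include every observed movie."""
    return sorted(user for user, movies in mentions.items() if observed <= movies)


def anonymity_set_sizes(sequence: List[str], mentions: Dict[str, Set[str]]) -> List[int]:
    """Number of matching accounts after each additional observed movie."""
    seen: Set[str] = set()
    sizes: List[int] = []
    for movie in sequence:
        seen.add(movie)
        sizes.append(len(candidates(seen, mentions)))
    return sizes


print(anonymity_set_sizes(["Alien", "Heat", "Ran"], MENTIONS))  # [4, 2, 1]
```

In this toy data, one movie leaves four possible accounts, two movies leave two, and three movies leave exactly one.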

Implications for Online Privacy

The findings from this research have far-reaching implications for online privacy. The ability of LLMs to deanonymize users challenges the fundamental assumption that pseudonymity provides adequate protection. Users may be exposed to risks such as doxxing, stalking, and the creation of detailed marketing profiles.

As LLMs continue to evolve, their capacity to identify individuals based on minimal information will likely increase, further eroding the effectiveness of pseudonymous accounts.

Mitigation Strategies

Given the potential risks associated with LLMs and deanonymization, it is crucial to explore strategies for mitigating these threats. Some proposed measures include:

- Rate Limits on API Access: Platforms should enforce strict rate limits on API access to user data to prevent automated scraping.

- Detection of Automated Scraping: Implementing systems to detect and block automated scraping activities can help protect user privacy.

- Monitoring for Misuse: LLM providers should actively monitor for misuse of their models in deanonymization attacks and develop guardrails to prevent such activities.

- User Education: Educating users about the limits of pseudonymity and encouraging them to regularly delete old posts can reduce their exposure.
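As one way to picture the first measure, the sketch below shows a token-bucket rate limiter, a common technique for capping how quickly a single client can call an API. It is a minimal illustration under stated assumptions, not a description of any platform's actual implementation. The class name, parameters, and limits are hypothetical.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock              # injectable so the limiter can be tested
        self.tokens = float(capacity)   # start with a full bucket
        self.updated = clock()

    def allow(self) -> bool:
        """Return True if the request may proceed, consuming one token."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill for the time that has passed, never exceeding capacity.
        self.tokens = min(float(self.capacity), self.tokens + elapsed * self.rate)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Hypothetical limit: bursts of 5 requests, refilled at 1 request per second.
bucket = TokenBucket(rate=1.0, capacity=5)
results = [bucket.allow() for _ in range(7)]
print(results)
```

In practice a platform would keep one bucket per API key or client, so that a single scraper exhausts only its own allowance.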

Future Considerations

As LLMs become more sophisticated, the landscape of online privacy will continue to evolve. Researchers and developers must remain vigilant in understanding the implications of these technologies and work collaboratively to develop effective solutions.

Furthermore, policymakers should consider regulations that address the challenges posed by AI-driven deanonymization, ensuring that users’ rights to privacy are protected in the digital age.

Frequently Asked Questions

How do LLMs deanonymize pseudonymous users?

LLMs analyze data across various social media platforms, linking pseudonymous accounts with real identities through cross-platform references and behavioral patterns.

What are the main risks?

The primary risks include doxxing, stalking, and the creation of detailed marketing profiles, which can expose personal information of users who rely on pseudonyms for privacy.

How can these risks be mitigated?

Mitigation strategies include enforcing rate limits on API access, detecting automated scraping, monitoring for misuse of LLMs, and educating users about privacy risks.

Call To Action

As the landscape of online privacy continues to evolve, consider implementing robust privacy measures and staying informed about the implications of AI technologies.
