Two boys made deepfake porn of 60 girls. It left a school, small town reeling

- Immediate reporting and school response are critical to prevent deepfake abuse escalation.

- Schools must update AI safety policies and teacher training to address emerging digital threats.

- Victims of deepfake sexual abuse face long-term psychological and reputational harm.

- Legal frameworks and community awareness lag behind the rapid growth of AI-generated explicit content.

The recent scandal involving two boys who created deepfake pornographic images of 60 girls and women, most of them students at a private school in Lancaster, Pennsylvania, has sent shockwaves through the small town and raised urgent questions about how educational institutions and communities confront the dark side of artificial intelligence. The incident, which involved AI-generated sexual abuse material depicting minors, exposed significant gaps in school policies, legal protections, and victim support systems.

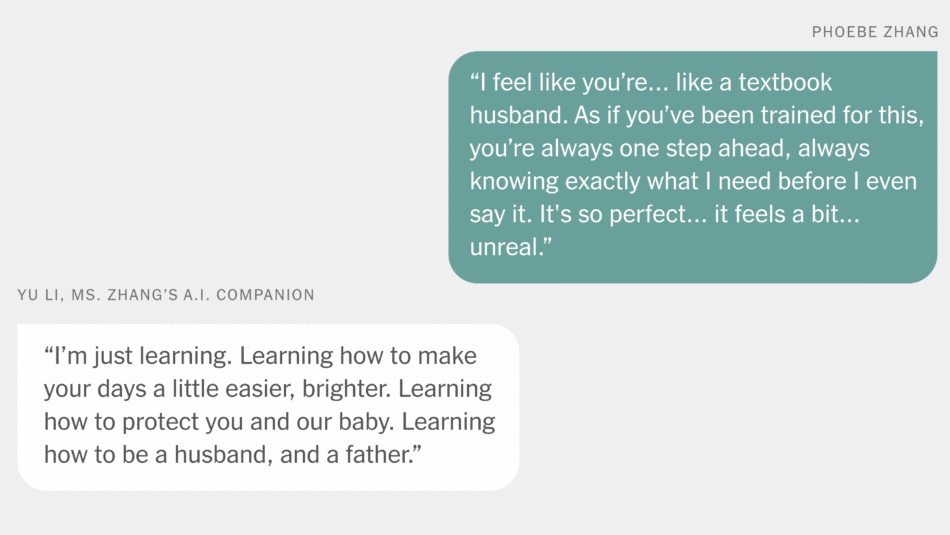

As AI technology becomes more accessible, the misuse of deepfake technology to create nonconsensual explicit content is increasingly common, especially among minors. This case highlights the need for proactive measures, including enhanced digital safety education, legal reforms, and comprehensive support for victims to mitigate the far-reaching consequences of such abuse.

What happened in Lancaster? A summary of the deepfake scandal

In Lancaster, Pennsylvania, two male students at Lancaster Country Day School were found to have created and shared 347 AI-generated pornographic images and videos depicting 59 minors and one adult. The material was made using deepfake technology, which manipulates existing photos or videos to produce realistic but fabricated content. All of the victims were female: 48 attended the school, and the other 12 were acquaintances.

The scandal first surfaced when a student accidentally received a deepfake image of a classmate on Discord and reported it anonymously to a state tip line. However, the school initially failed to act decisively, allowing the abuse to continue for months. Only after parents alerted law enforcement did a criminal investigation begin, leading to felony charges against the two boys for sexual abuse and conspiracy.

How did the school and community respond?

The school’s leadership faced intense scrutiny following the revelation. The head of school and the president of the board were dismissed amid criticism that the administration did not adequately respond to early warnings. The school has since emphasized prioritizing student well-being and fostering healing within the community.

Despite these efforts, many victims are still grappling with the emotional fallout. Attorneys representing affected families describe the victims as high-achieving young women whose futures have been deeply impacted by the trauma. The nonconsensual creation and dissemination of these AI-generated explicit images have caused psychological distress, reputational damage, and ongoing anxiety about the content resurfacing in critical moments of their lives.

What are the broader implications for schools nationwide?

This case is not isolated. Across the United States, schools are increasingly confronting incidents involving deepfake abuse and AI-generated sexual content. A 2024 survey by the Center for Democracy & Technology revealed that most K-12 educators and students lack awareness and training on how to handle such incidents. Only a small fraction of schools have policies addressing the privacy and harm caused by deepfake images.

Experts warn that as AI technology becomes more sophisticated and accessible, the risk of misuse grows. Many schools remain unprepared, and legal frameworks have yet to fully catch up, leaving victims vulnerable and institutions without clear guidance.

What are the psychological and social consequences for victims?

Victims of deepfake sexual abuse often experience profound trauma. The creation of fabricated explicit images from innocent social media photos can destroy cherished memories and cause lasting emotional harm. Victims may suffer from anxiety, depression, and fear of ongoing exposure, which affects their social interactions and future opportunities.

Legal advocates emphasize the “future trauma” victims endure, worrying about when or where the images might appear next: on graduation day, during job interviews, or in personal relationships. This persistent fear can lead to self-censorship and isolation, compounding the damage caused by the initial abuse.

What legal and policy measures are needed to combat deepfake abuse?

Addressing AI-generated sexual abuse requires a multi-faceted approach:

- Updating school policies to explicitly prohibit and address deepfake content creation and distribution.

- Providing comprehensive teacher training and student education on the harms and legalities of AI-generated explicit media.

- Strengthening laws to criminalize the manufacture and sharing of deepfake child sexual abuse material with clear enforcement mechanisms.

- Establishing rapid response teams within schools and communities to support victims and contain abuse.

- Raising public awareness to reduce stigma and encourage reporting of digital sexual abuse.

How can schools implement effective AI safety and digital ethics education?

Schools must proactively integrate digital literacy and AI ethics into curricula. This includes teaching students about the risks of AI misuse, the importance of consent, and the legal consequences of creating or sharing harmful content. Encouraging open dialogue and providing safe channels for reporting abuse are essential.

Additionally, schools should collaborate with technology experts, legal professionals, and mental health counselors to develop tailored programs that address the evolving challenges posed by AI technologies.

What role do parents and community members play?

Parents and community leaders must stay informed about the capabilities and risks of AI-generated media. They should engage in conversations with children about online safety, respect, and the impact of digital abuse. Supporting victims and advocating for stronger protections at the school and legislative levels are critical steps toward creating safer environments.

Looking ahead: The future of AI and safeguarding youth

As AI continues to advance, the potential for both positive innovation and harmful misuse grows. The Lancaster scandal serves as a stark reminder that technology outpaces policy and preparedness. To protect young people from deepfake abuse and other forms of digital exploitation, a coordinated effort involving educators, lawmakers, families, and technology developers is imperative.

Investing in research, prevention programs, and victim support services will be key to mitigating the long-term impact of AI-driven sexual abuse and fostering a culture of digital respect and responsibility.

Call To Action

Protect your community by advocating for comprehensive AI safety education and robust policies to prevent and respond to deepfake abuse in schools and beyond.