How AI Can Read Our Scrambled Inner Thoughts

- AI technologies are enabling real-time translation of thoughts into text, enhancing communication for individuals with speech impairments.

- Brain-computer interfaces (BCIs) are evolving, allowing for more accurate decoding of neural signals associated with speech.

- Commercialization of these technologies is on the horizon, promising significant impacts on how we interact with technology and each other.

The intersection of artificial intelligence and neuroscience is paving the way for groundbreaking advancements in communication. With the ability to decode our thoughts, AI is transforming the lives of individuals with speech impairments, offering them a voice where there was none. This innovation not only enhances personal communication but also opens new avenues for interaction with technology.

As researchers continue to refine brain-computer interfaces (BCIs), the implications for society are profound. AI that can interpret inner thoughts could reshape fields including healthcare, education, and personal communication, which makes thought decoding a critical area of focus for future development.


The Evolution of Brain-Computer Interfaces

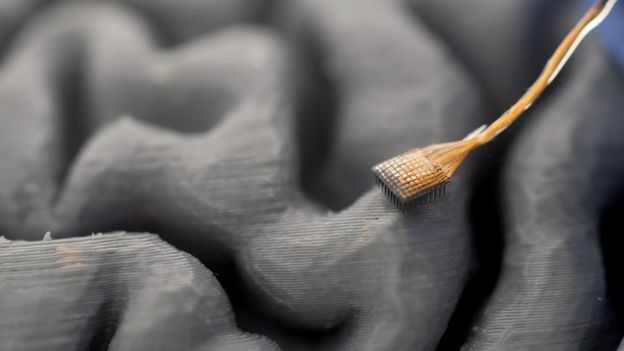

Brain-computer interfaces (BCIs) have been a subject of research for decades, with early experiments dating back to the 1960s. Pioneering work by neuroscientists like Eberhard Fetz demonstrated that animals could learn to control devices through neural activity. However, translating complex human thoughts into actionable data has remained a significant challenge.

Recent advances have accelerated the development of BCIs that turn neural activity into text. For instance, a proof-of-concept study at Stanford University in 2021 allowed a quadriplegic participant to communicate by picturing himself writing letters in the air. This breakthrough laid the groundwork for later work on decoding speech and thought directly.

Decoding Inner Speech

One of the most exciting developments in BCI technology is the ability to decode “inner speech.” Researchers at Stanford University have made strides in this area by exploring whether they could capture the neural signals associated with thoughts, rather than just physical attempts to speak. This approach could drastically improve communication for individuals who cannot articulate their thoughts verbally.

In a recent study, participants were tasked with counting shapes on a screen while their brain activity was monitored. This method allowed researchers to decode their inner dialogue in real time, showcasing the potential for a more intuitive communication method.

How AI Translates Neural Signals

The process of translating thoughts into text involves algorithms that analyze patterns of neural activity. These algorithms, powered by machine learning, are trained to recognize the specific signals associated with different phonemes, the smallest units of sound that distinguish one word from another. Once the system can map patterns to phonemes, it can assemble them into the words a person is trying to communicate.

Unlike traditional speech recognition systems that rely on audio input, these AI systems interpret neural signals directly from the brain. This innovative approach has the potential to create a seamless communication experience for individuals with speech impairments.
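
To make the idea of recognizing signals associated with phonemes more concrete, here is a minimal sketch of a nearest-centroid classifier. Everything in it is an assumption for illustration: the phoneme labels, the four-number "feature vectors", and the noise level are synthetic, and real decoders are trained on recordings from implanted electrodes with far more elaborate models. The sketch only shows the general shape of the task, which is to learn one typical pattern per phoneme and then assign new activity to the closest pattern.

```python
import math
import random

# Hypothetical phoneme labels with made-up "neural feature" prototypes.
# These numbers are synthetic and stand in for real electrode recordings.
PHONEME_PROTOTYPES = {
    "AH": [1.0, 0.2, 0.1, 0.7],
    "S": [0.1, 0.9, 0.3, 0.2],
    "T": [0.4, 0.1, 1.0, 0.5],
}


def make_samples(n_per_class, noise, rng):
    """Generate noisy synthetic feature vectors around each prototype."""
    samples = []
    for label, proto in PHONEME_PROTOTYPES.items():
        for _ in range(n_per_class):
            vec = [x + rng.gauss(0.0, noise) for x in proto]
            samples.append((vec, label))
    return samples


def train_centroids(samples):
    """Average the training vectors of each label: one centroid per phoneme."""
    sums, counts = {}, {}
    for vec, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(vec)
            counts[label] = 0
        sums[label] = [s + x for s, x in zip(sums[label], vec)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}


def classify(vec, centroids):
    """Return the phoneme whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(vec, centroids[label]))


rng = random.Random(0)  # fixed seed so the synthetic data is reproducible
train = make_samples(n_per_class=50, noise=0.1, rng=rng)
test = make_samples(n_per_class=20, noise=0.1, rng=rng)

centroids = train_centroids(train)
correct = sum(classify(vec, centroids) == label for vec, label in test)
accuracy = correct / len(test)
print(f"Synthetic test accuracy: {accuracy:.2f}")
```

Because the synthetic prototypes are well separated, this toy classifier scores very high. Real neural data is noisier and differs from person to person, which is one reason decoders are typically trained for each individual.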

Commercialization and Future Implications

The commercialization of BCI technology is rapidly approaching, with companies like Elon Musk’s Neuralink leading the charge. As these technologies become more accessible, they promise to transform not only individual lives but also societal interactions. The ability to communicate thoughts directly could change how we engage with technology and each other.

Experts predict that within the next few years, we will see these technologies deployed at scale, potentially revolutionizing industries such as healthcare, education, and entertainment. The implications for personal communication are particularly significant, as individuals with disabilities gain new opportunities for expression.

Challenges and Considerations

While the potential benefits of AI-driven BCIs are immense, several challenges remain. The current technology often requires invasive procedures, such as implanting electrodes in the brain, which carry surgical risks and raise ethical questions. Additionally, the accuracy of thought translation can vary based on individual differences in brain activity.

Researchers are also exploring non-invasive methods to make these technologies more accessible. For instance, advancements in brain imaging techniques could allow for the interpretation of thoughts without the need for surgical intervention. However, achieving the same level of accuracy as invasive methods remains a significant hurdle.

Ethical Implications

The ability to read thoughts raises important ethical questions. Issues surrounding privacy, consent, and the potential for misuse of technology must be carefully considered as these innovations progress. Establishing clear guidelines and regulations will be crucial in ensuring that the technology is used responsibly and ethically.

Case Studies and Real-World Applications

Several case studies illustrate the transformative potential of AI in decoding thoughts. For example, a 52-year-old woman who had been paralyzed for 19 years participated in a study where her internal monologue was translated into text through a BCI. This breakthrough not only allowed her to communicate but also provided insights into the workings of the human brain.

Another notable case involved a patient with amyotrophic lateral sclerosis (ALS), who was able to communicate at a rate of 32 words per minute with 97.5% accuracy. These real-world applications demonstrate the profound impact that AI can have on enhancing communication for those with severe speech impairments.
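
For readers wondering how figures like these can be computed, the sketch below shows one common approach. It assumes that accuracy is reported as one minus the word error rate (the article does not say how the 97.5% figure was measured), and the sentences and timing are invented for illustration. The function names `word_error_rate` and `words_per_minute` are hypothetical helpers, not part of any study's software.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words.

    Assumes the reference sentence contains at least one word.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # a reference word was dropped
                curr[j - 1] + 1,     # an extra word was inserted
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


def words_per_minute(word_count, seconds):
    """Convert a word count and a duration in seconds to words per minute."""
    return word_count * 60 / seconds


# Invented example: what the person intended versus what was decoded.
reference = "i would like a glass of water please"
hypothesis = "i would like a glass of water peas"

wer = word_error_rate(reference, hypothesis)
print(f"Word error rate: {wer:.3f}")
print(f"Accuracy (assumed to be 1 - WER): {1 - wer:.3f}")
print(f"Rate: {words_per_minute(8, 15.0):.1f} words per minute")
```

In this made-up example, one wrong word out of eight gives a word error rate of 0.125, and eight words decoded in 15 seconds corresponds to 32 words per minute.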

Future Directions in AI and Thought Translation

The future of AI in reading our scrambled inner thoughts is promising. Researchers are continuously refining algorithms and exploring new methods for decoding neural signals. As technology advances, we can expect to see improvements in accuracy, speed, and accessibility.

Moreover, interdisciplinary collaboration between neuroscientists, engineers, and ethicists will play a crucial role in shaping the future of this field. By working together, these experts can ensure that the development of AI technologies aligns with societal values and ethical standards.

Frequently Asked Questions

What are brain-computer interfaces?

Brain-computer interfaces (BCIs) are systems that allow direct communication between the brain and external devices. They decode neural signals so that people can control devices or communicate, which is particularly useful for those with speech impairments.

How does AI translate thoughts into text?

AI uses machine learning algorithms to analyze patterns of neural activity associated with thoughts. By recognizing these patterns, AI can translate brain signals into text or commands, facilitating communication for individuals with disabilities.

What are the ethical concerns?

Ethical concerns include privacy, consent, and potential misuse of technology. As AI systems gain the ability to read thoughts, it is crucial to establish guidelines to protect individuals’ rights and ensure responsible use of these technologies.

Call To Action

Explore the transformative potential of AI and BCIs in enhancing communication. Stay informed about advancements in this field and consider how these technologies can impact your organization or community.
