Is The RAM AI-pocalypse Finally Over? Probably Not

- Understanding the ongoing challenges of RAM limitations in AI model development.

- Evaluating how memory optimization techniques are evolving but remain insufficient for large-scale AI.

- Exploring the impact of hardware constraints on AI scalability and performance.

- Identifying future trends that could mitigate the RAM bottleneck in artificial intelligence systems.

The rapid advancement of artificial intelligence has brought with it a series of technical hurdles, among which the so-called “RAM AI-pocalypse”—the crisis of insufficient memory capacity to run increasingly complex AI models—has been a persistent concern. Despite significant progress in both hardware and software, many experts argue that the core issue of limited memory resources is far from resolved.

This article delves into why the RAM bottleneck continues to challenge AI innovation, examines current strategies to overcome it, and discusses what the future holds for memory management in AI systems. Understanding these dynamics is crucial for businesses and developers aiming to harness the full potential of machine learning and deep learning technologies.


What Is the RAM AI-pocalypse?

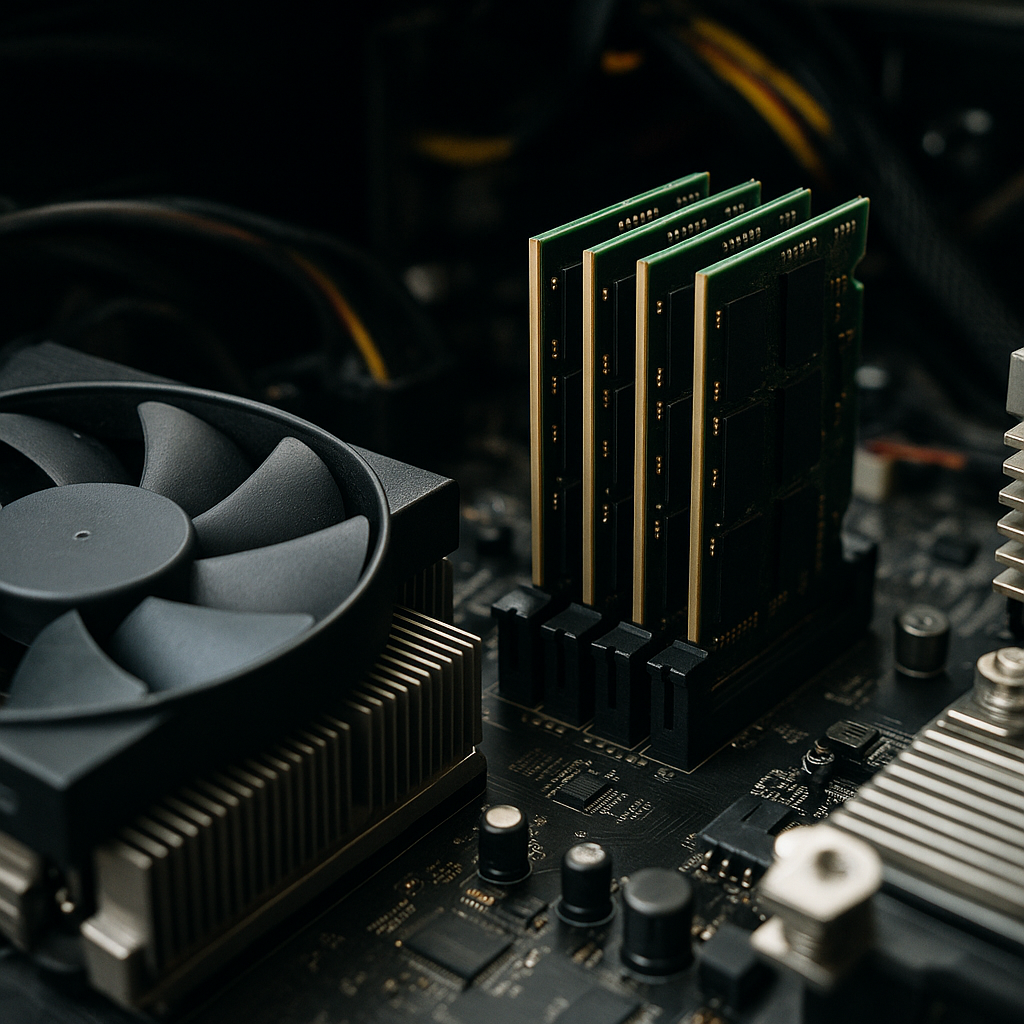

The RAM AI-pocalypse refers to the growing challenge faced by AI practitioners: the memory available in current computing systems is often insufficient to train and deploy cutting-edge AI models. The memory needed to hold a model's weights grows roughly in proportion to its parameter count, and parameter counts have grown by orders of magnitude, often outpacing hardware capabilities.

This phenomenon has led to significant bottlenecks in AI scalability, forcing developers to seek alternative solutions to manage or reduce memory consumption.

Why Is RAM Still a Bottleneck for AI?

At the core, AI models—especially those based on deep neural networks—require vast amounts of memory to store parameters, intermediate computations, and training data. The memory bandwidth and capacity of existing hardware often fall short, causing slowdowns or outright failures during training or inference.

Several factors contribute to this persistent issue:

- Model size explosion: State-of-the-art models such as large language models (LLMs) can have billions or even trillions of parameters. At 2 bytes per parameter, a trillion-parameter model needs about 2 TB for its weights alone.

- Limited hardware upgrades: While GPUs and TPUs have improved, their on-device memory capacity has not scaled in proportion to model size.

- Data-intensive training: Training on massive datasets requires temporary storage for batches, gradients, and activations, further straining memory.
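
To make the factors above concrete, here is a rough back-of-the-envelope estimate in Python. It is a sketch under stated assumptions, not a precise sizing tool: the function name `training_memory_gb` is made up for illustration, the 7-billion-parameter model is hypothetical, and the default of two optimizer states per parameter assumes an Adam-style optimizer. Activations are ignored, so real usage will be higher.

```python
def training_memory_gb(num_params, bytes_per_param=4, optimizer_states=2):
    """Rough lower bound (in GB) for weights, gradients, and optimizer state.

    Assumption: activations and framework overhead are NOT included.
    """
    weights = num_params * bytes_per_param
    gradients = num_params * bytes_per_param
    optimizer = num_params * bytes_per_param * optimizer_states
    return (weights + gradients + optimizer) / 1e9


# Hypothetical 7-billion-parameter model trained in 32-bit precision
print(training_memory_gb(7e9))  # 112.0
```

Even before counting activations, this hypothetical model needs more memory for training than its 28 GB of weights would suggest.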

Current Strategies to Mitigate RAM Constraints in AI

Despite these challenges, the AI community has developed several approaches to alleviate memory pressure:

- Model pruning and quantization: Reducing the size of models by eliminating redundant parameters or using lower-precision arithmetic to decrease memory footprint.

- Gradient checkpointing: Saving memory by selectively storing only some intermediate results during training and recomputing others as needed.

- Distributed training: Splitting models and data across multiple devices to share memory load, though this introduces communication overhead.

- Memory-efficient architectures: Designing models that inherently require less memory, such as sparse networks or transformer variants optimized for efficiency.
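
As an illustration of the quantization idea in the list above, the following minimal sketch maps floating-point weights to 8-bit integers with a single shared scale. It is one simple scheme (symmetric, per-tensor) chosen for clarity, not the method of any particular framework, and the helper names and sample weights are invented for the example. Stored as 1-byte integers instead of 4-byte floats, the weights would take roughly a quarter of the space, at the cost of a small rounding error.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Approximate reconstruction of the original floats."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]          # illustrative values only
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error)
```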

While these techniques have improved the situation, none fully eliminate the RAM bottleneck, especially as models continue to grow.
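
The trade-off behind gradient checkpointing can be shown with a toy example. The sketch below assumes a chain of scalar `tanh` layers (a stand-in for a real network) and compares a baseline that stores every layer input with a version that stores one input per segment and recomputes the rest during the backward pass. All function names are hypothetical, and the "stored" counts are a simplified proxy for memory use.

```python
import math


def layer_forward(w, x):
    return math.tanh(w * x)


def layer_backward(w, x, grad_out):
    # d/dx tanh(w * x) = w * (1 - tanh(w * x)^2)
    y = math.tanh(w * x)
    return grad_out * w * (1.0 - y * y)


def grad_full(weights, x):
    """Baseline: keep every layer input for the backward pass."""
    inputs = []
    for w in weights:
        inputs.append(x)
        x = layer_forward(w, x)
    grad = 1.0
    for w, inp in zip(reversed(weights), reversed(inputs)):
        grad = layer_backward(w, inp, grad)
    return grad, len(inputs)


def grad_checkpointed(weights, x, segment=4):
    """Keep one input per segment; recompute the others when needed."""
    checkpoints = {}
    for i, w in enumerate(weights):
        if i % segment == 0:
            checkpoints[i] = x
        x = layer_forward(w, x)
    grad = 1.0
    peak_stored = len(checkpoints)
    for start in sorted(checkpoints, reverse=True):
        seg_weights = weights[start:start + segment]
        inputs = []
        h = checkpoints[start]
        for w in seg_weights:          # extra forward work: the cost of saving memory
            inputs.append(h)
            h = layer_forward(w, h)
        peak_stored = max(peak_stored, len(checkpoints) + len(inputs))
        for w, inp in zip(reversed(seg_weights), reversed(inputs)):
            grad = layer_backward(w, inp, grad)
    return grad, peak_stored


weights = [1.0 + 0.05 * (i % 5 - 2) for i in range(16)]
g_full, stored_full = grad_full(weights, 0.3)
g_ckpt, stored_ckpt = grad_checkpointed(weights, 0.3, segment=4)
print(stored_full, stored_ckpt)
```

Both versions produce the same gradient; the checkpointed one holds fewer values at its peak but runs each layer's forward computation a second time.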

Hardware Innovations Addressing AI Memory Challenges

On the hardware front, manufacturers are pushing the boundaries to increase memory bandwidth and capacity:

- High-bandwidth memory (HBM) technologies provide faster data transfer rates between processors and memory.

- Next-generation GPUs and TPUs are being designed with larger onboard memory and optimized memory hierarchies.

- Specialized AI accelerators incorporate custom memory solutions to handle AI workloads more efficiently.

- Emerging memory technologies like non-volatile RAM (NVRAM) and 3D-stacked memory promise higher capacities and speeds.

However, these innovations come with increased costs and complexity, limiting immediate widespread adoption.
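
To see why bandwidth matters alongside capacity, consider a simplified model of text generation in which every new token requires reading all model weights from memory once. Under that assumption, weight size divided by memory bandwidth gives a rough floor on per-token latency. The bandwidth figures below are hypothetical round numbers, not the specifications of any real device, and the function name is invented for illustration.

```python
def min_seconds_per_token(model_bytes, bandwidth_bytes_per_s):
    """Lower bound on per-token latency if all weights are read once per token."""
    return model_bytes / bandwidth_bytes_per_s


# Hypothetical 7-billion-parameter model stored at 2 bytes per parameter
model_bytes = 7e9 * 2

for label, bandwidth in [("100 GB/s (hypothetical)", 100e9),
                         ("1 TB/s (hypothetical)", 1e12)]:
    seconds = min_seconds_per_token(model_bytes, bandwidth)
    print(f"{label}: at least {seconds * 1000:.1f} ms per token")
```

The estimate ignores caching, batching, and compute time, so it is only a way to reason about orders of magnitude.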

Is the RAM AI-pocalypse Over? The Reality Check

Despite these advances, the RAM AI-pocalypse is not over. The relentless growth in AI model sizes and data demands means that memory constraints remain a critical issue. Businesses and researchers must continue balancing model complexity with available resources.

Moreover, the trade-offs involved in memory optimization strategies—such as increased computation time or reduced accuracy—mean that solutions are often situational rather than universal.

Future Trends to Watch in AI Memory Management

Looking ahead, several promising directions could help alleviate the RAM bottleneck:

- Algorithmic breakthroughs: New training algorithms that reduce memory requirements without sacrificing performance.

- Hybrid memory architectures: Combining fast volatile memory with slower but larger non-volatile memory to optimize cost and capacity.

- Cloud-based AI platforms: Leveraging elastic cloud resources to dynamically allocate memory as needed, reducing local hardware constraints.

- AI-driven memory management: Using AI itself to optimize memory usage and predict bottlenecks dynamically.

These trends suggest that while the RAM AI-pocalypse is unlikely to end soon, its impact may be mitigated through innovation and smarter resource management.
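
The hybrid memory idea mentioned above can be sketched as a toy two-tier store: a small fast tier that evicts its least recently used entry to a larger slow tier. This is an illustrative simulation only. The class `TieredStore` and its fields are hypothetical, plain Python dictionaries stand in for both tiers, and real systems manage this in hardware, drivers, or the operating system.

```python
from collections import OrderedDict


class TieredStore:
    """Toy two-tier store: small fast tier backed by a larger slow tier."""

    def __init__(self, fast_capacity):
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stands in for fast volatile memory
        self.slow = {}             # stands in for slower, larger memory
        self.slow_reads = 0        # how often the slow tier had to be read

    def put(self, key, value):
        self.slow.pop(key, None)   # avoid keeping a stale copy in the slow tier
        self.fast[key] = value
        self.fast.move_to_end(key)
        self._evict()

    def get(self, key):
        if key in self.fast:
            self.fast.move_to_end(key)
            return self.fast[key]
        value = self.slow.pop(key)  # raises KeyError if the key is unknown
        self.slow_reads += 1
        self.fast[key] = value      # promote to the fast tier
        self._evict()
        return value

    def _evict(self):
        # Move least recently used entries to the slow tier when over capacity
        while len(self.fast) > self.fast_capacity:
            old_key, old_value = self.fast.popitem(last=False)
            self.slow[old_key] = old_value


store = TieredStore(fast_capacity=2)
for name, value in [("a", 1), ("b", 2), ("c", 3)]:
    store.put(name, value)
value_a = store.get("a")  # "a" was evicted earlier, so this reads the slow tier
print(list(store.fast), list(store.slow), store.slow_reads)
```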

Business Implications of the RAM AI-pocalypse

For enterprises investing in AI, understanding the memory challenge is essential for strategic planning. Key considerations include:

- Cost vs. performance trade-offs: Investing in high-memory hardware or cloud resources can increase costs but enable more powerful AI applications.

- Scalability planning: Anticipating memory needs as models evolve to avoid bottlenecks that delay deployment.

- Adopting memory-efficient AI tools: Choosing frameworks and models optimized for lower memory consumption to maximize ROI.

- Risk management: Preparing for potential delays or failures due to memory constraints during AI project execution.

How to Prepare Your AI Infrastructure for Memory Challenges

Organizations can take proactive steps to address the RAM bottleneck:

- Audit current AI workloads to identify memory-intensive processes.

- Invest in scalable hardware with expandable memory options.

- Leverage cloud platforms offering flexible memory allocation.

- Train teams on memory optimization techniques and best practices.

- Monitor emerging technologies and update infrastructure accordingly.
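
As a starting point for the audit step above, Python's standard `tracemalloc` module can report the peak memory allocated by Python code during a call. The sketch below is a minimal example: `peak_memory_bytes` and `build_batch` are hypothetical helper names, and `build_batch` is only a stand-in for a real workload. Note that `tracemalloc` tracks allocations made through Python's allocator, so it will not capture GPU memory or memory allocated by native libraries outside that allocator.

```python
import tracemalloc


def peak_memory_bytes(func, *args, **kwargs):
    """Run func and return (result, peak bytes traced during the call)."""
    tracemalloc.start()
    try:
        result = func(*args, **kwargs)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak


def build_batch(n):
    # Hypothetical stand-in for a memory-intensive preprocessing step
    return [float(i) for i in range(n)]


batch, peak = peak_memory_bytes(build_batch, 100_000)
print(f"peak traced memory: {peak / 1e6:.1f} MB")
```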

Conclusion: Navigating the RAM AI-pocalypse Era

While the RAM AI-pocalypse is not definitively over, understanding its nuances enables businesses and developers to navigate the evolving landscape effectively. Combining hardware upgrades, software optimizations, and strategic planning can help mitigate memory constraints and unlock the full potential of AI technologies.

Enhance your AI projects by partnering with experts who specialize in memory optimization and scalable AI infrastructure to overcome the RAM bottleneck and accelerate innovation.